A Maturity Bug: Confusing an Executed Process with a Consolidated One

Gabriel Tavares

Verified Author

23 April

For a long time, digital product design was built around a simple principle: guiding the user through a flow.

This logic shaped much of UX/UI practice: linear journeys, conversion funnels, hierarchical menus, predictable steps, and screens with clearly defined functions. This model is still relevant, especially in regulated, transactional, and operational systems. But with the rise of generative experiences and AI-powered products, it is starting to show an important limitation.

That limitation is this: people don’t think in screens. People think in intent.

They don’t want to “go through step 1, then step 2, and then click confirm.” They want to solve something, compare options, make a decision, produce a result, delegate a task, and save time.

That’s why the central discussion in product design is increasingly moving away from fixed flows and toward designing experiences around intent.

Designing for intent means creating experiences that respond to what the user is trying to achieve, not just the path the system was preconfigured to enforce.

This may sound subtle, but it fundamentally changes the design process.

In the traditional model, the main question is:

“What’s the next screen?”

In the intent-driven model, the question becomes:

“What is this person trying to accomplish right now—and what’s the best way to help them get there?”

This shift moves the focus of design:

In generative environments, this becomes even more evident. The system no longer depends only on pre-built screens. It operates through patterns, signals, inference, and context. That means design is no longer just about shaping surfaces, but also about defining how the system understands, prioritizes, and responds.

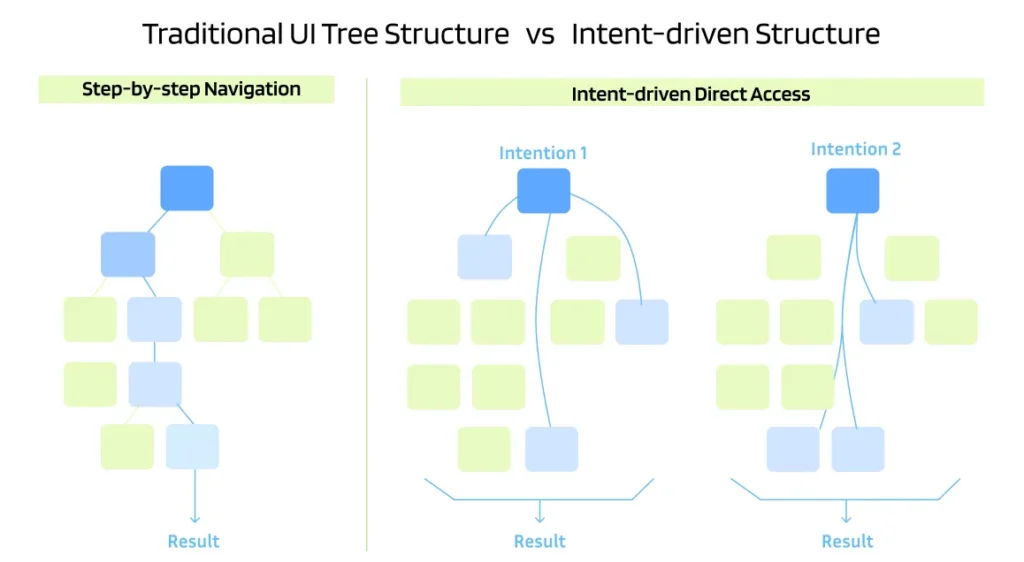

A simple way to understand this shift is by comparing two experience models:

In the flow-based model, users navigate through a hierarchy of pages and states to reach a result. It’s step-by-step, predictable, and useful when the ideal path can be defined in advance.

It works well when:

In the intent-based model, the system starts from the user’s goal and directly accesses the relevant parts of the experience. Instead of forcing everyone through the same path, it can recombine content, prioritize different steps, and adapt responses based on context and intent.
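The contrast between a fixed flow and an intent-driven experience can be sketched in a few lines of Python. This is a toy illustration, not a production pattern: it assumes every piece of the experience is tagged with the goals it serves, and the `Step` type, step names, and goal labels are all hypothetical. The fixed flow returns the same sequence for everyone; the intent-driven version keeps only the steps relevant to the user’s goal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """A hypothetical building block of the experience."""
    name: str
    serves: frozenset[str]  # goals this step helps with (assumed tagging)


STEPS = [
    Step("browse_catalog", frozenset({"explore"})),
    Step("compare_plans", frozenset({"explore", "decide"})),
    Step("enter_payment", frozenset({"buy"})),
    Step("confirm_order", frozenset({"buy"})),
]


def flow_based(steps: list[Step]) -> list[str]:
    """Fixed flow: everyone walks the same predefined sequence."""
    return [s.name for s in steps]


def intent_based(steps: list[Step], goal: str) -> list[str]:
    """Intent-driven: keep only the steps that serve the user's goal, in their original order."""
    return [s.name for s in steps if goal in s.serves]


print(intent_based(STEPS, "buy"))  # ['enter_payment', 'confirm_order']
```

A user whose goal is `"buy"` skips browsing and comparison entirely, while `flow_based(STEPS)` still walks through all four steps. A real system would also reorder and recombine content rather than only filter it.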

The biggest change isn’t just in the interface. It’s in the experience architecture.

Design now models:

In other words, design expands from shaping the experience to defining the understanding behind it.

In AI-driven experiences, user intent can be captured in two complementary ways:

Explicit intent is when the user clearly states what they want.

Examples:

In this scenario, design reduces ambiguity and helps users express their needs through:

Implicit intent is when the system infers the goal from behavioral and contextual signals, such as:

This increases the power of the experience, but also the risk of error. That’s where design becomes critical: making interpretation transparent and adjustable.
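One way to keep interpretation transparent and adjustable is to carry the evidence along with every reading of intent. The Python sketch below works under stated assumptions: the `IntentReading` type, the signal names, and the 0.6 confidence value are hypothetical. An explicit statement always overrides an inferred goal, and inferred goals keep the reasons the interface could show to the user.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class IntentReading:
    goal: str
    source: str        # "explicit" or "inferred"
    confidence: float  # 0.0 to 1.0; explicit statements are treated as 1.0
    reasons: list[str] = field(default_factory=list)  # evidence the UI can show


def infer_intent(signals: dict[str, str]) -> IntentReading | None:
    """Toy inference from hypothetical behavioral signals."""
    if signals.get("last_page") == "pricing" and signals.get("visit") == "returning":
        return IntentReading("compare_plans", "inferred", 0.6,
                             ["you viewed the pricing page", "you have visited before"])
    return None


def resolve_intent(stated: str | None, signals: dict[str, str]) -> IntentReading | None:
    """An explicit statement always wins; otherwise fall back to inference."""
    if stated:
        return IntentReading(stated, "explicit", 1.0, ["you asked for this"])
    return infer_intent(signals)


reading = resolve_intent(None, {"last_page": "pricing", "visit": "returning"})
print(reading.goal, reading.reasons)
```

Because an explicit statement wins, a user’s correction can be handled the same way: whatever they type or select simply replaces the inferred reading.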

If AI gets it wrong, users must be able to:

There’s a common misconception that designing for intent means abandoning funnels, journeys, and flows. That’s not the case.

Journeys still matter. The difference is that they are no longer the single dominant structure. They coexist with a more flexible, adaptive layer.

Instead of designing a single ideal path, designers must consider:

This is especially relevant in products where value comes not from completing steps, but from helping users reach decisions, insights, or actions faster.

A practical way to structure intent-driven experiences is by identifying the dominant type of intent. In digital contexts, four types are common:

Informational intent: the user wants to learn, understand, or explore.

Example:

“I want to understand the differences between these options”

Design response: clarity, progressive depth, comparisons, explanations, and discovery support.

Navigational intent: the user wants to reach a specific destination.

Example:

“I want to go straight to that feature”

Design response: direct access, fast recognition, shortcuts, and minimal friction.

Comparative intent: the user is evaluating alternatives.

Example:

“Which option makes the most sense for me?”

Design response: assisted comparison, clear criteria, contextual recommendations, and evidence.

Transactional intent: the user wants to complete a specific action.

Example:

“I want to buy / approve / submit”

Design response: speed, confirmation, security, error prevention, and predictability.

This framework helps product teams answer a key question:

“What kind of help does the user actually need right now?”
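The four intent types and their design responses can be written down as a simple lookup, which makes the framework easy to share between design and engineering. The Python sketch below is illustrative only: the enum names are shorthand for the four types above, and the keyword cues in `CUES` are hypothetical stand-ins drawn from the example utterances. A real product would more likely rely on a trained classifier or a language model than on substring matching.

```python
from __future__ import annotations

from enum import Enum


class Intent(Enum):
    LEARN = "learn, understand, or explore"
    NAVIGATE = "reach a specific destination"
    COMPARE = "evaluate alternatives"
    ACT = "complete a specific action"


# Design responses, copied from the framework above.
DESIGN_RESPONSE: dict[Intent, list[str]] = {
    Intent.LEARN: ["clarity", "progressive depth", "comparisons", "explanations", "discovery support"],
    Intent.NAVIGATE: ["direct access", "fast recognition", "shortcuts", "minimal friction"],
    Intent.COMPARE: ["assisted comparison", "clear criteria", "contextual recommendations", "evidence"],
    Intent.ACT: ["speed", "confirmation", "security", "error prevention", "predictability"],
}

# Hypothetical keyword cues based on the example utterances; checked in this order.
CUES: dict[Intent, tuple[str, ...]] = {
    Intent.ACT: ("buy", "approve", "submit"),
    Intent.COMPARE: ("which option", "makes the most sense"),
    Intent.NAVIGATE: ("go straight", "take me to"),
    Intent.LEARN: ("understand", "differences", "explain"),
}


def classify(utterance: str) -> Intent | None:
    """Return the first intent whose cue appears in the utterance, or None."""
    text = utterance.lower()
    for intent, cues in CUES.items():
        if any(cue in text for cue in cues):
            return intent
    return None


def help_needed(utterance: str) -> list[str]:
    """Answer 'what kind of help does the user actually need right now?'"""
    intent = classify(utterance)
    if intent is None:
        return ["ask a clarifying question"]  # hypothetical fallback when no cue matches
    return DESIGN_RESPONSE[intent]


print(help_needed("Which option makes the most sense for me?"))
# ['assisted comparison', 'clear criteria', 'contextual recommendations', 'evidence']
```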

When intent becomes the focus, designers move beyond screen design and into more systemic thinking.

This doesn’t replace core UX/UI principles like clarity, consistency, accessibility, and usability. It builds on top of them.

In practice, the scope expands to include:

It also requires closer collaboration with product, engineering, and data teams, because in intent-driven systems, experience quality depends heavily on how well the system interprets users.

What separates a mature generative experience from one that is merely impressive is how it handles errors.

In inference-based systems, errors are not exceptions—they are expected.

AI may:

That’s why designing for intent also means designing for graceful failure.

A well-designed system doesn’t need to be perfect. It needs to let users recover control with minimal effort.
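Graceful failure often comes down to how assertively the system acts on an inference. The sketch below assumes the system produces a confidence score for an inferred goal and knows whether the resulting action can be undone; the two thresholds are hypothetical placeholders, not recommended values. High confidence on a reversible action proceeds with an undo option, medium confidence (or high confidence on an irreversible action) asks for confirmation, and low confidence falls back to a clarifying question.

```python
# Hypothetical thresholds; real values would need tuning per product and per action.
ACT_THRESHOLD = 0.85
SUGGEST_THRESHOLD = 0.5


def respond(goal: str, confidence: float, reversible: bool) -> dict:
    """Decide how assertively to act on an inferred goal, always leaving a way back."""
    if confidence >= ACT_THRESHOLD and reversible:
        # Confident and cheap to undo: act, but keep recovery one step away.
        return {"action": "proceed", "goal": goal, "offer": ["undo", "change goal"]}
    if confidence >= SUGGEST_THRESHOLD:
        # Plausible guess, or a confident guess about an irreversible action: confirm first.
        return {"action": "confirm", "goal": goal, "offer": ["yes", "something else"]}
    # Too uncertain to guess: ask instead of acting.
    return {"action": "ask", "goal": None, "offer": ["clarifying question"]}
```

The reversibility check reflects the transactional design response described earlier: where a mistake is costly, confirmation matters more than speed.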

Key principles:

In traditional experiences, success is often measured by:

These still matter, but they’re no longer enough.

The main question becomes:

Did the experience help the user achieve their goal with clarity, confidence, and less effort?

That requires more behavior-oriented metrics:

This reframes design evaluation from comparing screens to assessing real value delivery.
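Behavior-oriented metrics can often be computed from ordinary session logs. The Python sketch below shows one possible set, assuming each session records whether the user reached their goal, how often they rephrased the request, how often they overrode the system’s interpretation, and how long it took; the field and metric names are hypothetical. Reformulation and correction rates can surface friction that a plain conversion count hides.

```python
from __future__ import annotations

import statistics
from dataclasses import dataclass


@dataclass
class Session:
    goal_reached: bool
    reformulations: int            # times the user rephrased or restarted the request
    corrections: int               # times the user overrode the system's interpretation
    seconds_to_goal: float | None  # None when the goal was not reached


def outcome_metrics(sessions: list[Session]) -> dict[str, float]:
    """Share-of-sessions rates plus median time to goal for successful sessions."""
    if not sessions:
        raise ValueError("need at least one session")
    n = len(sessions)
    times = [s.seconds_to_goal for s in sessions
             if s.goal_reached and s.seconds_to_goal is not None]
    return {
        "goal_completion_rate": sum(s.goal_reached for s in sessions) / n,
        "reformulation_rate": sum(s.reformulations > 0 for s in sessions) / n,
        "correction_rate": sum(s.corrections > 0 for s in sessions) / n,
        "median_seconds_to_goal": statistics.median(times) if times else float("nan"),
    }
```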

When an AI experience underperforms, the issue usually lies in one of two areas:

In the interface layer, the experience may be confusing, poorly structured, or lacking refinement capabilities.

In the understanding layer, the system may misinterpret signals, lack context, or fail to capture intent effectively.

This distinction is critical. Many teams try to fix understanding problems with visual tweaks. But in intent-driven systems, the issue often lies before the interface—in how the system understands the user.
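Before adjusting the interface, it can help to check which layer actually failed. The sketch below is a rough triage, assuming you can tell what the user actually wanted, for example from their own correction; the function names and labels are hypothetical. If the system inferred the wrong goal, the problem is understanding, and visual tweaks are unlikely to fix it; if the goal was right but the user still did not get there, the interface is the likelier culprit.

```python
from collections import Counter


def diagnose(inferred_goal: str, actual_goal: str, goal_reached: bool) -> str:
    """Rough triage of one session into the layer that most likely failed."""
    if inferred_goal != actual_goal:
        return "understanding"  # the system misread what the user wanted
    if not goal_reached:
        return "interface"      # right goal, but the user still could not get there
    return "ok"


def triage(sessions: list[tuple[str, str, bool]]) -> Counter:
    """Count sessions per layer so fixes go to the layer that needs them."""
    return Counter(diagnose(*s) for s in sessions)
```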

Great design operates in both layers:

The real power of this shift isn’t superficial personalization. It’s the ability to build products that respond more accurately to what each user actually needs in a given moment.

Different users should experience different paths—not for aesthetic reasons, but for intent alignment.

If we treat everyone the same, we may deliver consistency—but not relevance.

And in AI-driven products, relevance is no longer a differentiator. It’s a requirement.

Designing for intent is not a complete break from traditional UX/UI. It’s a shift in focus.

Flows, journeys, and interfaces still exist. But they are no longer the center of the strategy. They become tools within a broader capability: helping systems understand goals, adapt responses, and drive outcomes.

This shift requires designers to expand their role:

In the end, the biggest change isn’t technological. It’s mental.

We are moving from designing what users see

to designing how systems understand.

And that changes everything.
